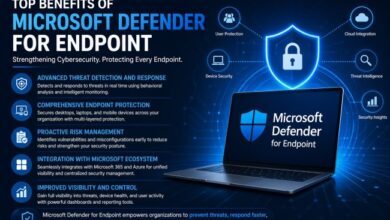

The Evolution of AI in Music Creation: From Text to Fully Produced Songs

Artificial intelligence (AI) has transformed numerous creative industries, and music is no exception. From assisting with audio mastering to composing entire symphonies, AI tools are redefining how music is created, produced, and consumed. What once required extensive training, expensive studio equipment, and collaborative teams can now begin with something as simple as a written idea. As natural language processing and machine learning models continue to evolve, they are enabling new ways for individuals to translate thoughts, emotions, and stories into sound.

Turning Words into Music: The Rise of Text-Based Composition

One of the most remarkable advancements in AI-driven creativity is the ability to generate music directly from written prompts. With a concept, mood description, or even a short paragraph, users can initiate the composition process. Tools such as Text to Song AI analyze the structure, emotion, and context of written language and translate that information into melody, harmony, rhythm, and sometimes even vocals.

These systems rely on large datasets of existing music and lyrics to learn patterns in composition. By recognizing how different musical elements correspond to certain emotional tones or thematic cues, AI can create songs that reflect the user’s intent. For example, a prompt describing “a calm sunset by the ocean” may result in soft instrumentation, slower tempos, and ambient textures.

This approach lowers the barrier to entry for aspiring musicians. Individuals without formal music theory knowledge can experiment with songwriting, while experienced artists can use AI-generated drafts as creative starting points. Importantly, these systems function as tools that assist creativity rather than replace it, offering inspiration and structural support.

The Technology Behind AI-Generated Music

AI music generation is powered by several advanced technologies, primarily machine learning and deep neural networks. At the core are models trained on vast libraries of audio recordings, MIDI data, and lyric databases. These models learn patterns in melody, chord progression, rhythm, and lyrical phrasing.

Two significant technologies often used in music AI systems are:

- Natural Language Processing (NLP): Enables AI to interpret text prompts and extract themes, mood, and narrative elements.

- Generative Models: Architectures such as transformers and recurrent neural networks predict sequences of notes or words based on learned patterns.
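
To make the idea of predicting sequences of notes from learned patterns more concrete, here is a minimal sketch in Python. It deliberately swaps the neural architectures named above for a much simpler first-order Markov chain, which only counts which note tends to follow which. The function names and the toy melodies are illustrative assumptions, not part of any real music AI system.

```python
from collections import Counter, defaultdict

# Toy training data (an assumption for illustration): melodies as note names.
TOY_MELODIES = [
    ["C4", "D4", "E4", "C4", "D4", "E4", "G4"],
    ["E4", "G4", "C4", "D4", "E4"],
]


def train_transitions(melodies):
    """Count how often each note is followed by each other note."""
    transitions = defaultdict(Counter)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            transitions[current][following] += 1
    return transitions


def predict_next(transitions, note):
    """Return the most frequent follower of `note`, or None if unseen."""
    counts = transitions.get(note)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def continue_melody(transitions, start, length):
    """Extend a melody from `start` by repeatedly predicting the next note."""
    melody = [start]
    while len(melody) < length:
        next_note = predict_next(transitions, melody[-1])
        if next_note is None:
            break  # no learned pattern for this note, so stop early
        melody.append(next_note)
    return melody


if __name__ == "__main__":
    model = train_transitions(TOY_MELODIES)
    print(continue_melody(model, "C4", 5))
```

A real generative model conditions on far more context than the single previous note and usually samples from a probability distribution rather than always taking the most frequent continuation, but the predict-then-append loop has the same overall shape.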

When a user enters a text description, the AI processes semantic cues and translates them into musical parameters. For instance, words like “energetic” or “melancholic” influence tempo, key selection, and instrumentation. Some advanced systems also incorporate voice synthesis, allowing them to generate realistic-sounding vocals.
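
As a rough illustration of how semantic cues might become musical parameters, the sketch below maps mood words in a prompt to a tempo, key, and instrument list. Everything in it is an assumption made for the example: the `MusicParams` fields, the `MOOD_RULES` lexicon, and the specific values. Production systems learn these associations from data rather than reading them from a hand-written table.

```python
from dataclasses import dataclass


@dataclass
class MusicParams:
    """Hypothetical set of parameters a composition step could consume."""
    tempo_bpm: int = 100
    key: str = "C major"
    instruments: tuple = ("piano",)


# Hand-written mood lexicon (illustrative values, not from any real system).
MOOD_RULES = {
    "energetic": {
        "tempo_bpm": 140,
        "key": "E major",
        "instruments": ("drums", "electric guitar", "synth bass"),
    },
    "melancholic": {
        "tempo_bpm": 70,
        "key": "A minor",
        "instruments": ("piano", "strings"),
    },
    "calm": {
        "tempo_bpm": 65,
        "key": "F major",
        "instruments": ("ambient pad", "acoustic guitar"),
    },
}


def params_from_prompt(prompt: str) -> MusicParams:
    """Pick parameters from the first mood word in MOOD_RULES found in the prompt."""
    params = MusicParams()
    # Normalize the prompt into lowercase words without surrounding punctuation.
    words = {w.strip(".,!?;:\"'").lower() for w in prompt.split()}
    for mood, overrides in MOOD_RULES.items():
        if mood in words:
            for name, value in overrides.items():
                setattr(params, name, value)
            break  # keep the sketch simple: one mood per prompt
    return params


if __name__ == "__main__":
    print(params_from_prompt("A melancholic ballad about rain"))
    print(params_from_prompt("A calm sunset by the ocean"))
```

Prompts with no recognized mood word fall back to the defaults, which mirrors how a system needs some neutral starting point when the text gives no clear emotional cue.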

The integration of these technologies creates a pipeline where written language evolves into a structured musical piece, often within minutes.

Expanding Accessibility in Music Creation

AI-powered composition tools are making music creation more accessible than ever before. Traditionally, producing a polished song required technical skills in composition, arrangement, recording, and mixing. Today, AI tools simplify many of these steps.

This democratization benefits several groups:

- Aspiring Artists: Beginners can experiment without investing heavily in equipment or formal training.

- Content Creators: Podcasters, video producers, and marketers can quickly generate custom background music.

- Educators and Students: AI can serve as a teaching aid, demonstrating musical concepts in real time.

Accessibility also promotes experimentation. Users can explore genres outside their comfort zone, adjust tempo and style instantly, and iterate rapidly. Rather than replacing musicians, AI often acts as a creative collaborator that accelerates workflow and reduces technical barriers.

Creative Collaboration Between Humans and AI

Despite concerns about automation, AI in music creation is best understood as a collaborative partner. Human creativity remains central to defining artistic vision, emotional nuance, and storytelling depth.

Many musicians use AI to:

- Generate initial chord progressions or melodies.

- Brainstorm lyrical themes.

- Produce demo tracks for refinement.

- Experiment with alternative arrangements.

The human artist then edits, personalizes, and enhances the output. This iterative process blends computational efficiency with human intuition. AI can suggest combinations that might not occur naturally to a composer, sparking new directions in style or structure.

In this sense, AI acts much like a digital co-writer: efficient at generating possibilities but reliant on human judgment to select and refine the final piece.

Ethical Considerations and Copyright Challenges

As AI-generated music becomes more widespread, ethical and legal questions emerge. Since these systems are trained on extensive datasets of existing music, concerns about originality and copyright arise.

Key issues include:

- Training Data Transparency: Are copyrighted works included in training datasets?

- Ownership Rights: Who owns a song generated by AI—the user, the developer, or both?

- Creative Attribution: Should AI systems be credited in songwriting?

Different jurisdictions are still developing legal frameworks to address these questions. In many cases, AI-generated works are treated differently depending on the degree of human involvement. A user who significantly directs or modifies the creative output is more likely to have a valid claim to ownership.

Ethical considerations also extend to artistic authenticity. While AI can mimic styles, questions remain about whether machine-generated compositions carry the same emotional depth as human-created works. Ongoing discussion and regulation will shape how AI integrates responsibly into the music industry.

The Future of AI in the Music Industry

The trajectory of AI in music suggests continued innovation. Future systems may offer even more sophisticated capabilities, such as real-time collaboration, adaptive soundtracks for interactive media, and personalized songs tailored to individual listeners.

Emerging trends include:

- Real-Time Generation: AI producing music dynamically during live performances or gaming experiences.

- Personalized Soundtracks: Music that adapts to a listener’s mood or activity.

- Advanced Voice Modeling: Hyper-realistic vocals with nuanced expression.

As computing power increases and datasets expand, AI-generated music will likely become more complex and emotionally expressive. However, human creativity will remain essential in guiding artistic direction and cultural meaning.

Rather than replacing musicians, AI appears poised to redefine the creative landscape—providing tools that expand possibilities and inspire new forms of artistic expression.

Conclusion

Artificial intelligence has reshaped the process of music creation, transforming written ideas into fully realized compositions. Innovations like Text to Song AI illustrate how natural language and machine learning can intersect to produce structured, emotionally resonant music from simple textual prompts. These systems enhance accessibility, encourage experimentation, and support collaboration between humans and technology.

While challenges related to ethics and copyright remain, the overall impact of AI in music is one of expansion rather than replacement. As the technology continues to mature, it will likely serve as a powerful creative partner—enabling artists, educators, and creators to explore new sonic landscapes with unprecedented ease.